Don't Steal This Book

- by Michael Stillman

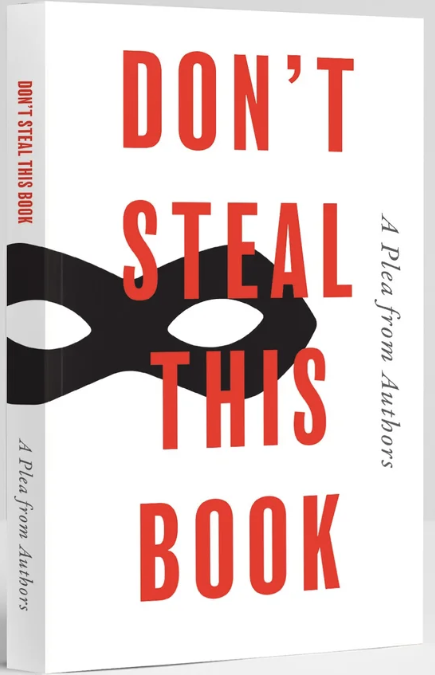

Don't Steal This Book.

Authors and publishers have been forced into a game of whac-a-mole with those who use or steal their copyrighted works without payment. The game comes in two versions. The first is a blatant case of theft, but the thieves are hard to locate and, even when they are found, hard to prevent from rising up again. The second may not clearly be theft in the legal sense, but the victims see it as, at minimum, a moral wrong. This second kind may be resolvable by settlement, or it may end up in the hands of the courts to decide.

The first point to recognize is that AI requires enormous amounts of data to function. It needs the data for “training,” which means acquiring the information necessary to answer virtually any question anyone can think to ask. AI sites like OpenAI's ChatGPT, Google's Gemini, and Anthropic's Claude gather much of their data from public sites. Non-copyrighted articles and news sites, learned scientific and other papers, discussion boards, even social media are fair game, though material from discussion boards and social media needs more verification before it can be trusted. Books out of copyright, such as those found on HathiTrust and Google Books, can legally be copied and used to “train” AI too. However, copyrighted works, often found online but behind a paywall, may not be copied without permission.

Our topic is books, and all but very old ones are subject to copyright. They may not legally be copied without permission.

The first case that came up recently is that of Anna's Archive. This is a pirate site. There is little pretense that what it does is legal; if its anonymous owners believed it was, they would not be anonymous. You can't find out where they are located, and when their URL is blocked, they simply switch to a new one. As for Anna, there is no such person. It's a made-up name.

What Anna's does is copy practically every book it can find online. It then offers the books for download, either free or for a “contribution.” Contributions need to be made in bitcoin, as that is hard to trace. What makes Anna's Archive, or other pirates like LibGen (Library Genesis), so desirable for AI is that the AI companies can gain access to millions of books all at once. They don't have to go out searching for them one by one. The free aspect is the cherry on top, since the AI sites would have to pay a lot to access all these books on a royalty basis. Reportedly, Anna's Archive is demanding bitcoin “contributions.” Meta is currently being sued by authors and publishers for using LibGen to “steal” their copyrighted books for Meta's Llama AI.

In this latest case, 14 publishers have banded together to go after the source of the theft, rather than the ultimate user. The suit is against Anna's Archive. The suing publishers are Hachette Book Group, HarperCollins, Macmillan Publishing Group, Penguin Random House, Simon & Schuster, Apress Media, Cengage Group, Elsevier, John Wiley & Sons, Bedford, Freeman & Worth Publishing Group, McGraw Hill, Pearson Education, and Taylor & Francis Group. Anna's Archive was previously sued by Atlantic Records for stealing audio files. At the time, they claimed Anna's Archive had stolen 61,344,044 books and 95,527,824 papers. Another 2 million books and 100,000 papers have been added since then, according to Parade Magazine.

If past suits by publishers are a guide, what can be expected is that the publishers will win. They will win by default judgment, which is what you get if the defendant does not show up to defend itself. The publishers will then have to figure out how to enforce their judgment against a party they cannot find (it is believed that at least some of these pirate sites are in Russia, and good luck going after them there). Anna's URL may be blocked, but the pirate will just set itself up again on another URL. That is what happened to Atlantic and other record companies, along with Spotify, whose site was hacked for the music. They won an injunction and several Anna's sites were taken down, but the pirates just moved to new ones.

The second case involves the AI search engines that use authors' copyrighted books to train their models, that is, to enable them to provide answers to users' questions. These are the ones who purchased or otherwise obtained the stolen book files from Anna's Archive or other similar sites. You have undoubtedly used these engines, such as ChatGPT and Gemini. We noted the suit against the likes of Anna's Archive, which steals these authors' works. But what about the end users? Maybe they didn't steal the authors' books, but they are in effect using that stolen merchandise. The AI search engines know they are using books that were effectively stolen, but do so anyway. Maybe they think there's no other practical way to gain access to millions of books, but need is not a defense for theft.

British authors came up with a plan to at least embarrass those stealing their work. They published a book specially for the London Book Fair. The title is Don't Steal This Book. It was written by nearly 10,000 authors. With all these contributors, Don't Steal This Book must be a very large book. Not really. It is almost a blank book. All it contains are the authors' names. All these great writers, and all you get is a long, excruciatingly boring read. But it's not meant to be read. It's meant to make a point.

Their point is that if authors aren't compensated for their work, they will write no more books. They need to eat just like the rest of us and food costs money. New books will be blank pages. The back cover reads, “The UK government must not legalise book theft to benefit AI companies.” The book is aimed at proposed legislation in the UK that in certain cases lets the AI search companies use copyrighted works unless the author opts out, instead of requiring the AI company to first seek permission.

Book organizer Ed Newton-Rex was quoted as saying, “This is not a victimless crime – generative AI competes with the people whose work it is trained on, robbing them of their livelihoods. The government must protect the UK’s creatives, and refuse to legalise the theft of creative work by AI companies.”